ScanRefer: 3D Object Localization in RGB-D Scans using Natural Language

European Conference on Computer Vision (ECCV), 2020.

Dave Zhenyu Chen¹, Angel X. Chang², Matthias Nießner¹

¹Technical University of Munich, ²Simon Fraser University

Submit to our ScanRefer Localization Benchmark here!

Introduction

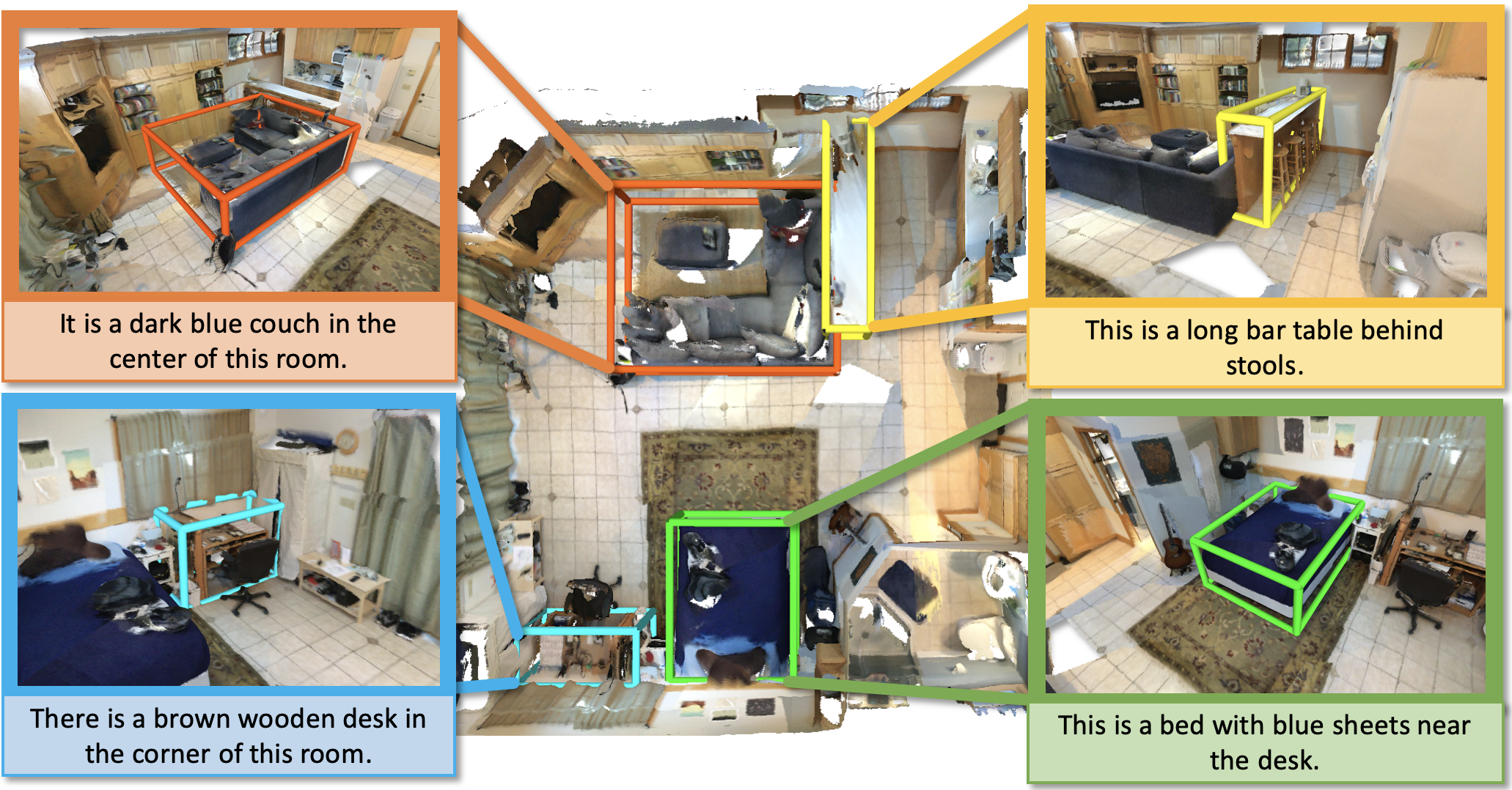

We introduce the task of 3D object localization in RGB-D scans using natural language descriptions. As input, we assume a point cloud of a scanned 3D scene along with a free-form description of a specified target object. To address this task, we propose ScanRefer, which learns a fused descriptor from 3D object proposals and encoded sentence embeddings. This fused descriptor correlates language expressions with geometric features, enabling regression of the 3D bounding box of the target object. We also introduce the ScanRefer dataset, containing 51,583 descriptions of 11,046 objects from 800 ScanNet scenes. ScanRefer is the first large-scale effort to perform object localization via natural language expressions directly in 3D.
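To make the idea of a fused descriptor concrete, the following is a minimal sketch in plain Python, not the ScanRefer implementation. It assumes that each object proposal already comes with a feature vector and a box, and that the sentence has already been encoded into an embedding. The names `localize` and `score_fused`, the toy weights, and the box layout are all hypothetical. The sketch concatenates each proposal feature with the sentence embedding, scores the result with a tiny hand-set two-layer network, and returns the box of the highest-scoring proposal. Selecting a proposal box stands in here for the bounding-box regression described above.

```python
import math
from typing import List, Sequence, Tuple

# Assumed box layout for illustration: (cx, cy, cz, dx, dy, dz).
Box = Tuple[float, float, float, float, float, float]


def relu(x: float) -> float:
    return x if x > 0.0 else 0.0


def softmax(scores: Sequence[float]) -> List[float]:
    # Subtract the maximum for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def score_fused(fused, w1, b1, w2) -> float:
    # One hidden ReLU layer followed by a linear output. The nonlinearity
    # lets the language part change the ranking of proposals.
    hidden = [relu(sum(w * x for w, x in zip(row, fused)) + b)
              for row, b in zip(w1, b1)]
    return sum(w * h for w, h in zip(w2, hidden))


def localize(proposal_feats, proposal_boxes, sentence_emb, w1, b1, w2):
    # Fuse by concatenation: [proposal features | sentence embedding].
    scores = [score_fused(list(f) + list(sentence_emb), w1, b1, w2)
              for f in proposal_feats]
    confidences = softmax(scores)
    best = max(range(len(confidences)), key=lambda i: confidences[i])
    return proposal_boxes[best], confidences


# Toy data (hypothetical): features are [is_chair, is_table],
# sentence embeddings are [mentions_chair, mentions_table].
FEATS = [[1.0, 0.0], [0.0, 1.0]]
BOXES: List[Box] = [(0.0, 0.0, 0.5, 0.6, 0.6, 1.0),
                    (2.0, 0.0, 0.4, 1.5, 0.8, 0.8)]
W1 = [[1.0, 0.0, 1.0, 0.0],   # fires when proposal and sentence agree on "chair"
      [0.0, 1.0, 0.0, 1.0]]   # fires when proposal and sentence agree on "table"
B1 = [-1.0, -1.0]
W2 = [1.0, 1.0]

chair_box, chair_conf = localize(FEATS, BOXES, [1.0, 0.0], W1, B1, W2)
table_box, table_conf = localize(FEATS, BOXES, [0.0, 1.0], W1, B1, W2)
print(chair_box, table_box)
```

With these hand-set weights, changing only the sentence embedding changes which proposal is selected, which is the behaviour a learned fused descriptor is meant to provide. In a trained model the weights would be learned from data and the features would come from a 3D detection backbone and a language encoder.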

Browse

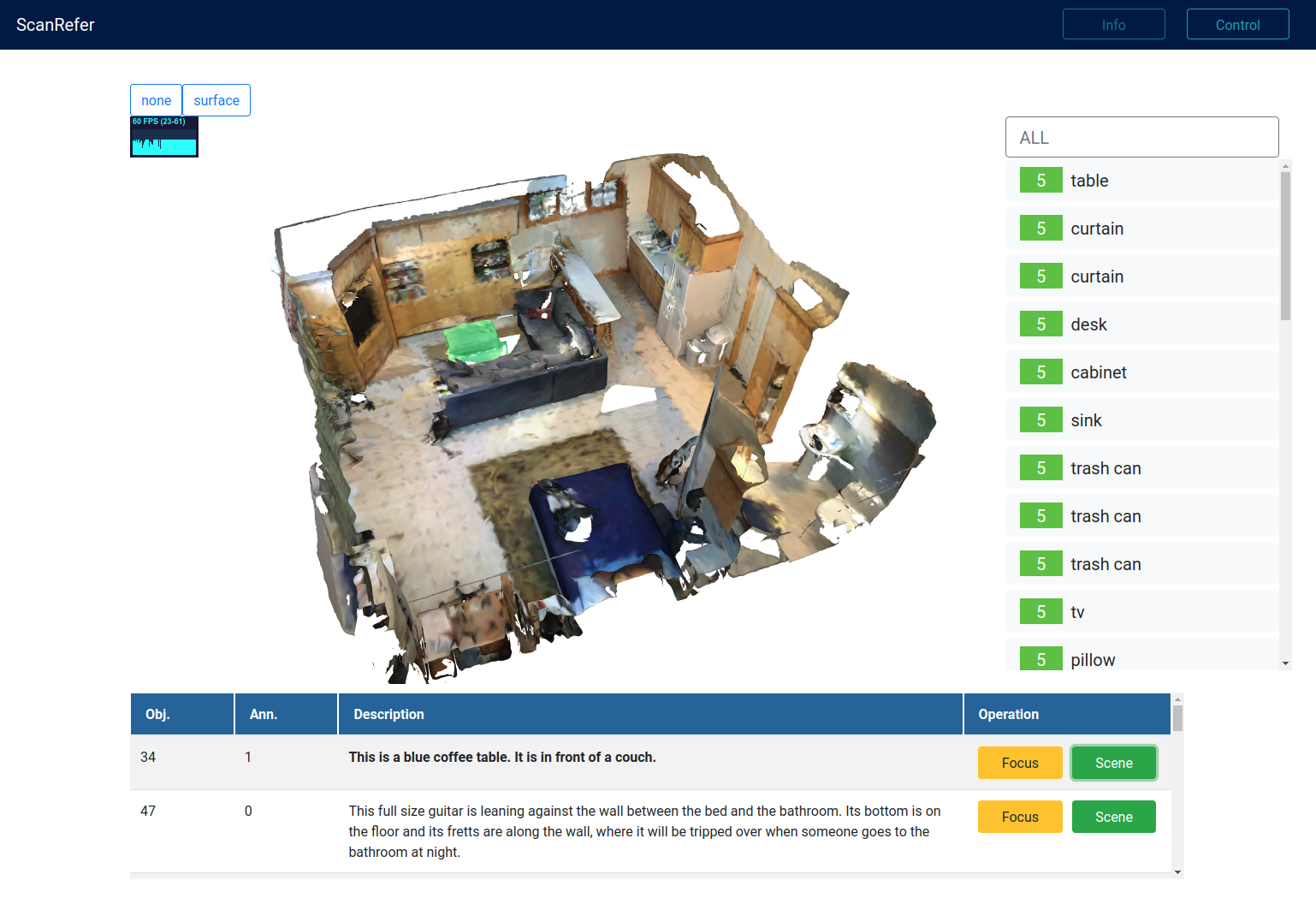

The ScanRefer data can be browsed online in your web browser. Learn more at the ScanRefer Data Browser.

(For a better browsing experience, we recommend using Google Chrome.)

Publication

European Conference on Computer Vision (ECCV), 2020. Paper | arXiv | Code

If you find our project useful, please consider citing us:

@article{chen2020scanrefer,
    title={ScanRefer: 3D Object Localization in RGB-D Scans using Natural Language},
    author={Chen, Dave Zhenyu and Chang, Angel X and Nie{\ss}ner, Matthias},
    journal={16th European Conference on Computer Vision (ECCV)},
    year={2020}
}